DANCEHACK

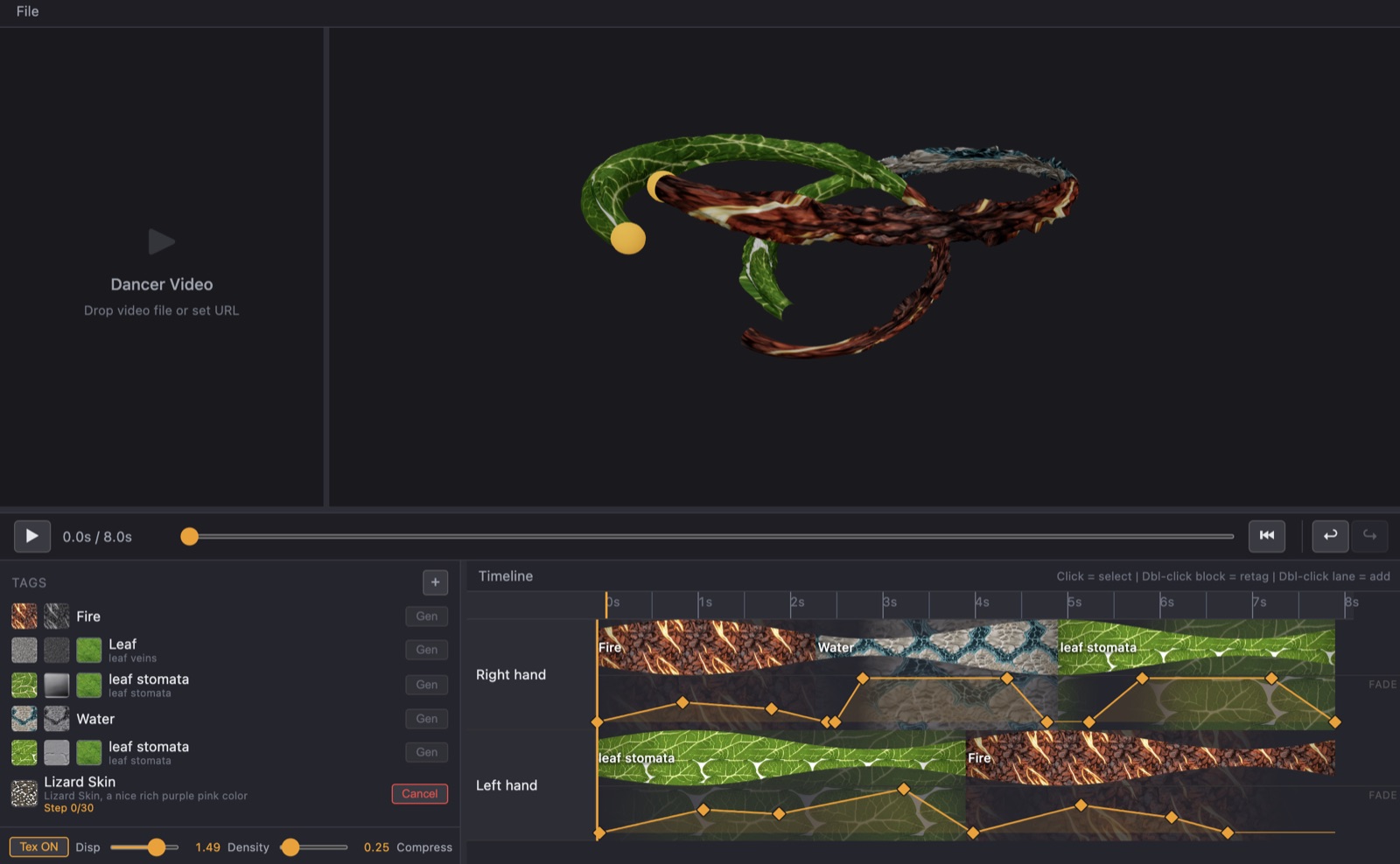

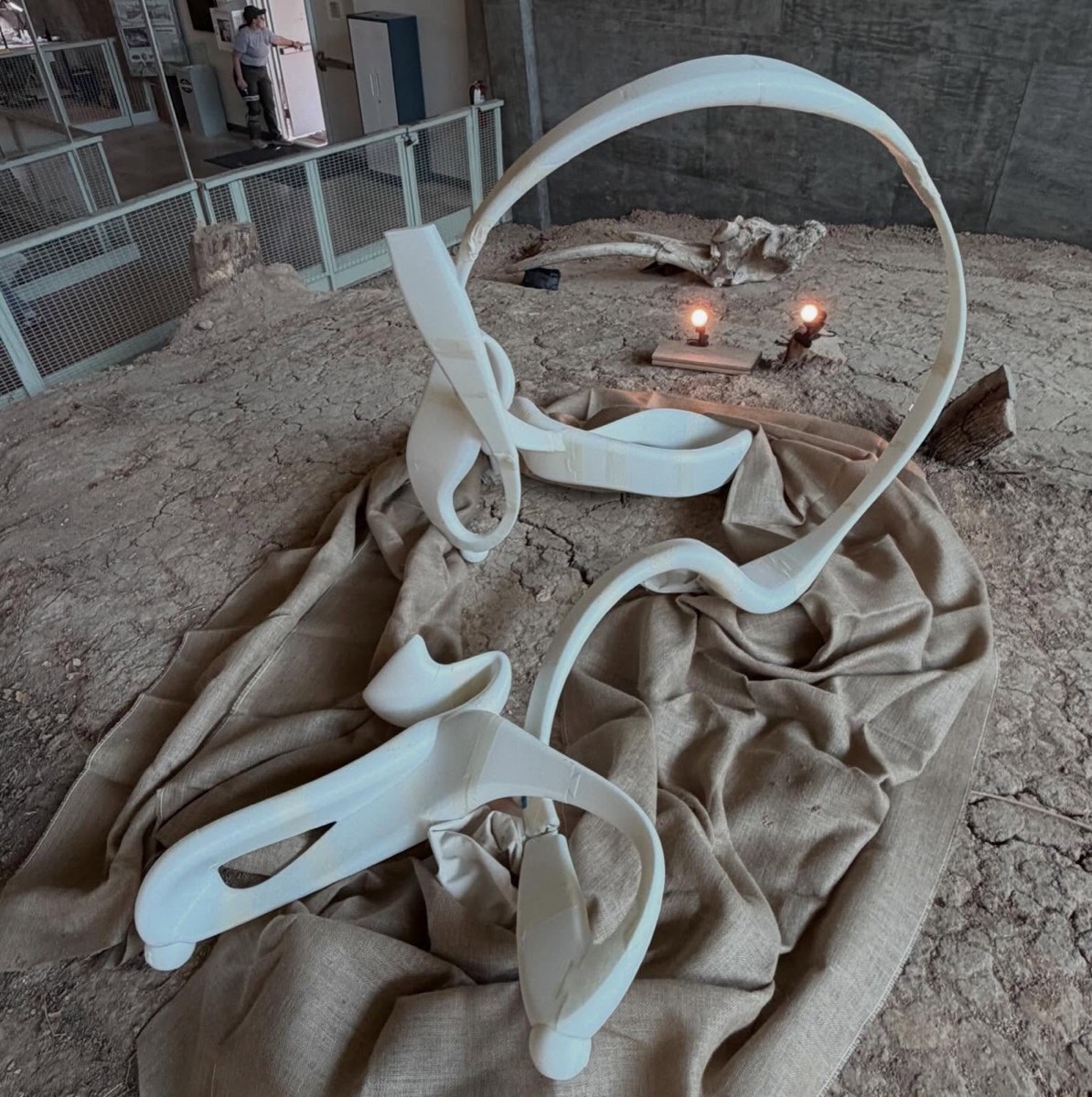

Texture-painting timeline for dancer motion. Built at Dancehack 2026 under choreographer Merli Guerra.

A custom tool for translating recorded dancer movement into texturable 3D geometry that can be re-animated and re-skinned for video and installation. The dancer's path becomes a brushstroke; an NLE-style timeline picks what it's painted with. Tag the strokes, hit Gen, and a local diffusion pipeline produces tileable albedo and PBR maps for each tag. Built during Dancehack 2026 — an interdisciplinary hack week pairing engineers with choreographers — with artist Merli Guerra as the project lead.

Stack

- React + Vite + TypeScript
  - Frontend shell: the timeline, tag library, and viewport overlays.
- react-three-fiber + drei + three.js
  - 3D viewport. Each path is a tube; tube radius is driven by per-point velocity, so the geometry encodes movement energy before any texture lands on it.
- Custom NLE timeline
  - One track per path, tagged segments per track, and a per-track fade-keyframe lane for displacement/opacity over time. Built from scratch, because no library quite matched the segment-plus-keyframe model.
- FastAPI generator service
  - Local Python service for texture generation, called per tag from the frontend.
- SDXL Base 1.0 + IP-Adapter
  - Albedo (color) generation from a text prompt plus an optional reference image.
- Circular-padded UNet + VAE
  - Switches the convolutions in SDXL's UNet and VAE to circular (wrap-around) padding, so kernels at the left edge read pixels from the right edge. Textures come out seam-free and horizontally tileable on the first try, with no manual seam-fix pass.
- CHORD (Ubisoft La Forge)
  - Neural PBR estimation: albedo → normal, height, roughness. One pass per tag.
- ComfyUI workflows
  - Offline: video → PLY point cloud and batch images → PLY, for the path-data ingestion side.

Process

The brief from Merli was: capture a dance, get something I can re-skin and re-light. The first decision was the abstraction: should the tool consume mesh, or path data? Mesh closes off authoring; paths leave the geometry generative. So the app's input contract is { id, name, points: { t, x, y, z, velocity }[] } and the tubes are built per-frame. The conversion from capture to paths happens upstream, in the ComfyUI workflows that consume captured video.
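To make the input contract concrete, here is a minimal Python sketch of the path shape and one way per-point velocity could drive tube radius. The field names come from the contract above; the class names, the `tube_radii` helper, the linear mapping, and the `r_min` / `r_max` defaults are all assumptions for illustration, not the app's actual code (which lives in the TypeScript frontend).

```python
from dataclasses import dataclass
from typing import List


@dataclass
class PathPoint:
    t: float         # timestamp of the sample
    x: float
    y: float
    z: float
    velocity: float  # assumed to be a non-negative speed


@dataclass
class DancePath:
    id: str
    name: str
    points: List[PathPoint]


def tube_radii(path: DancePath, r_min: float = 0.01, r_max: float = 0.05) -> List[float]:
    """Map each point's velocity linearly onto [r_min, r_max].

    Hypothetical mapping: velocities are normalized by the fastest
    point on the path, so the fastest sample gets r_max.
    """
    if not path.points:
        return []
    v_max = max(p.velocity for p in path.points)
    if v_max <= 0:
        # A motionless path gets a uniform minimum radius.
        return [r_min] * len(path.points)
    return [
        r_min + (r_max - r_min) * (max(p.velocity, 0.0) / v_max)
        for p in path.points
    ]
```

Normalizing per path keeps every tube within the same radius range regardless of how fast the dancer moved overall; a global normalization would instead preserve energy differences between paths.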

The artist already thinks in After Effects and Premiere, so the authoring surface is a familiar NLE: tagged segments on tracks, fade keyframes underneath. That keyframe lane drives displacement / opacity over time, so a single tube can fade between two textures without leaving the timeline. Everything to the right of "Author" in the pipeline is intentionally invisible — the diffusion stack only surfaces as a Gen button per tag and an inline progress counter.
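A fade-keyframe lane of this kind can be sampled with plain interpolation. The sketch below assumes keyframes are `(time, value)` pairs sorted by time, interpolated linearly and held constant outside the first and last key; the `fade_at` name and the linear easing are assumptions, since the write-up does not say how the lane is evaluated.

```python
from bisect import bisect_right
from typing import List, Tuple


def fade_at(keyframes: List[Tuple[float, float]], t: float) -> float:
    """Sample a keyframe lane at time t.

    keyframes must be sorted by time. Values are linearly interpolated
    between neighbouring keys and held constant before the first key
    and after the last one.
    """
    if not keyframes:
        return 0.0
    times = [k[0] for k in keyframes]
    i = bisect_right(times, t)  # index of the first key strictly after t
    if i == 0:
        return keyframes[0][1]
    if i == len(keyframes):
        return keyframes[-1][1]
    (t0, v0), (t1, v1) = keyframes[i - 1], keyframes[i]
    return v0 + (v1 - v0) * (t - t0) / (t1 - t0)
```

The sampled value could then serve as the blend weight between two tagged textures on the same tube, or as a displacement or opacity multiplier.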

Tileability is where the texture stack earns its keep. Wrap a non-tileable albedo around a tube and the seam is the first thing you see. Circular padding on the convolutions in SDXL's UNet and VAE wraps each kernel around the horizontal border, so features at the left edge are computed from pixels at the right edge; the model never "knows" it's painting a finite rectangle. Result: tileable on the first generation, no inpaint pass, no manual seam fix. IP-Adapter conditions on a reference image when the artist drops one, so a Lizard Skin tag actually looks like the reference photo, not just the prompt.
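The effect of circular padding is easiest to see in one dimension. The toy below is not the project's code: it is a pure-Python, single-row convolution (the `conv1d_row` name is hypothetical) that compares zero padding with wrap-around padding. In a real PyTorch model the equivalent switch is typically the `padding_mode="circular"` option on `torch.nn.Conv2d`; how this project applies it to SDXL, and how it restricts wrapping to the horizontal axis, is not described here.

```python
from typing import List


def conv1d_row(row: List[float], kernel: List[float], circular: bool) -> List[float]:
    """Same-size 1D convolution (cross-correlation) over one row of pixels.

    With circular=True, indices wrap around, so the kernel at the left
    edge reads values from the right edge. With circular=False, values
    outside the row are treated as zero.
    """
    half = len(kernel) // 2
    n = len(row)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(kernel):
            j = i + k - half
            if circular:
                acc += w * row[j % n]
            elif 0 <= j < n:
                acc += w * row[j]
        out.append(acc)
    return out


row = [1.0, 2.0, 3.0, 4.0]
box = [1.0, 1.0, 1.0]

zero_padded = conv1d_row(row, box, circular=False)
wrapped = conv1d_row(row, box, circular=True)

# Rolling the input by one pixel rolls the wrapped output by one pixel:
# there is no privileged "edge" position left for a seam to form at.
rolled_in = row[1:] + row[:1]
rolled_out = conv1d_row(rolled_in, box, circular=True)
```

With zero padding the two border outputs differ from what an interior pixel would get, which is what shows up as a seam once the texture is wrapped around a tube. With wrap-around padding every position is treated identically.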

PBR is delegated to CHORD (Ubisoft La Forge) rather than asked of the artist. The handoff is: albedo from SDXL → CHORD → normal, height, roughness in one pass. The artist never sees a material editor.