BAS

A single body, photographed in profile, broken across depth, and projected back onto a milled relief. Installed at Gray Area Grand Theater · BYOB · April 7, 2026.

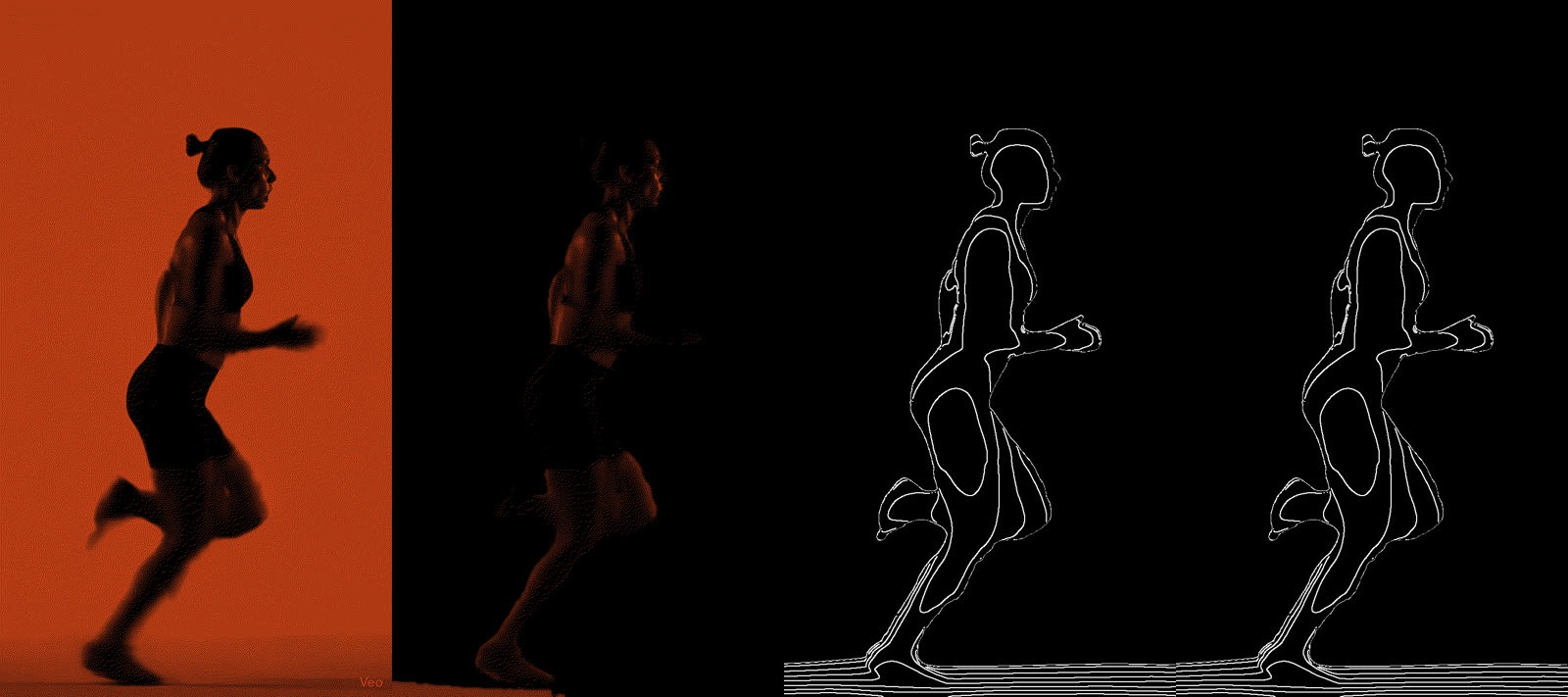

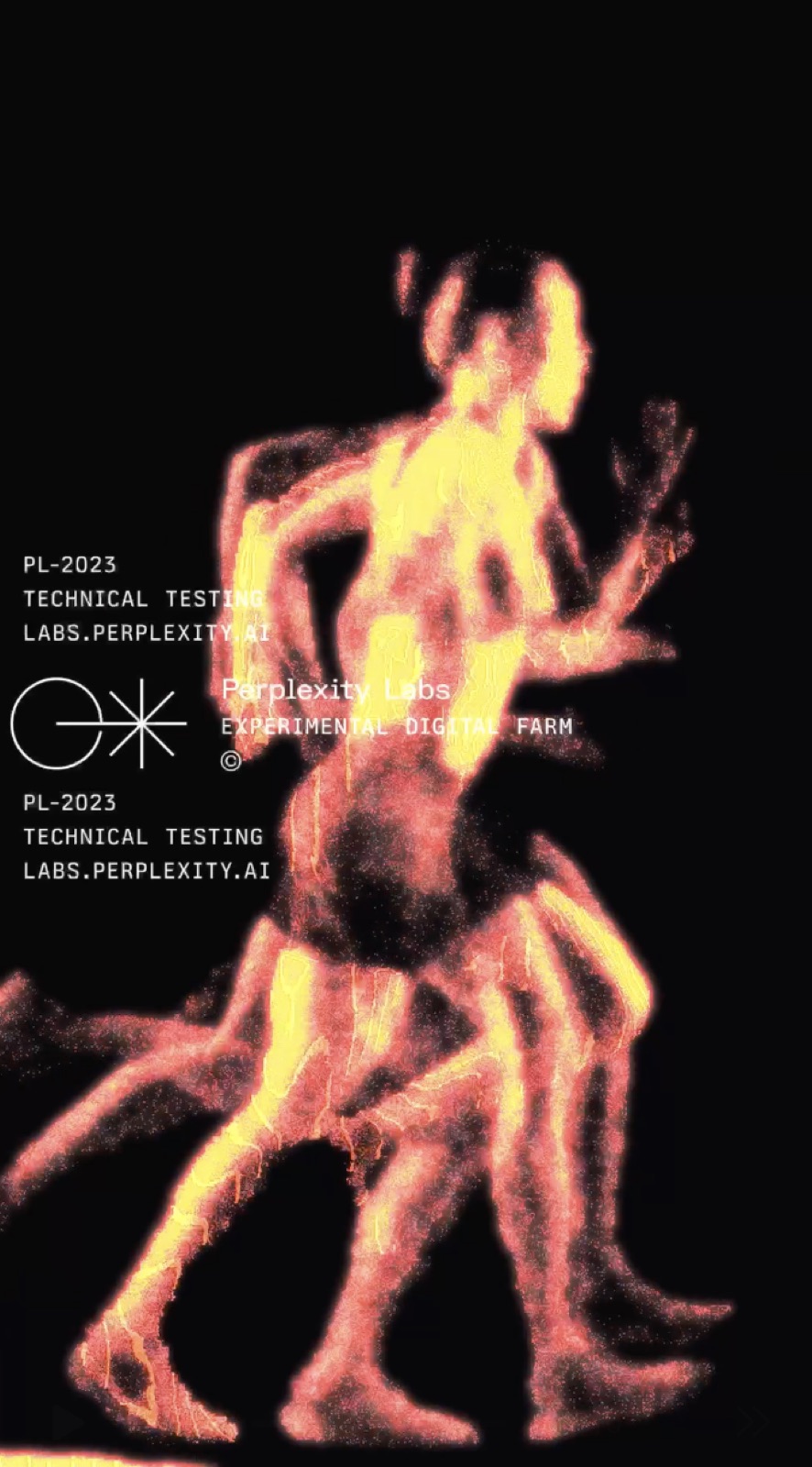

A chronophotograph held in one frame (Marey's interval, Muybridge's stride) rendered as surface and light. A runner is photographed in profile, the figure is broken across depth, and the resulting depth field is milled into a relief. A layered projection, keystoned onto the relief, recomposes the body in real time. A hardware MIDI controller on the gallery floor drives the layer mix.

Stack

- Google Veo
  - Generated motion footage. Replaced the original MediaPipe-tracked source after pose tracking turned out to be the wrong abstraction (see Process).

- MediaPipe pose tracking
  - First-pass source: 2D pose captured from real footage. Abandoned.

- StreamDiffusion
  - Real-time diffusion pass for look studies of the projection.

- Blender
  - Depth-map authoring: turning the figure into a CNC-ready surface.

- Fusion 360
  - Toolpath generation for the relief.

- ShopBot CNC
  - Cut at gallery scale on sheet stock: one panel, one pass, no tiling.

- TouchDesigner
  - Runtime compositor. The operator network stacks dithered passes, depth-derived isophotes, and pixelation thresholds; the output is keystoned onto the relief.

- Hardware MIDI controller
  - 9-channel output into TouchDesigner. It sits on the gallery floor as a public input, so visitors recompose the projection live.

- DaVinci Resolve
  - Grade pass: push contrast, crush midtones, and tune chroma so the image reads off the milled surface at gallery throw distance.
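The TouchDesigner entry above ends with the output being keystoned onto the relief. The document doesn't say which operator does this, but a four-corner keystone is a planar homography: a 3×3 matrix that sends the corners of the composited frame onto the corners of the relief as seen from the projector. A minimal pure-Python sketch, assuming the four projector-pixel corners were measured by hand; the coordinates and function names below are hypothetical:

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]
Matrix = List[List[float]]


def solve_linear(a: Matrix, b: List[float]) -> List[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate corners (three points collinear?)")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):  # back substitution
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def homography(src: Sequence[Point], dst: Sequence[Point]) -> Matrix:
    """3x3 H (with h33 fixed to 1) mapping four src corners onto four dst corners."""
    a, b = [], []
    for (x, y), (u, v) in zip(src, dst):
        # u = (h0 x + h1 y + h2) / (h6 x + h7 y + 1), rearranged to be linear in h
        a.append([x, y, 1, 0, 0, 0, -u * x, -u * y])
        b.append(u)
        a.append([0, 0, 0, x, y, 1, -v * x, -v * y])
        b.append(v)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], h[6:8] + [1.0]]


def warp_point(h: Matrix, x: float, y: float) -> Point:
    """Map (x, y) through H, including the perspective divide."""
    w = h[2][0] * x + h[2][1] * y + h[2][2]
    return (
        (h[0][0] * x + h[0][1] * y + h[0][2]) / w,
        (h[1][0] * x + h[1][1] * y + h[1][2]) / w,
    )


# Normalized composite frame -> hypothetical hand-measured projector pixels of the relief.
frame_corners = [(0.0, 0.0), (1.0, 0.0), (1.0, 1.0), (0.0, 1.0)]
relief_corners = [(112.0, 64.0), (1810.0, 90.0), (1765.0, 1020.0), (140.0, 1000.0)]
H = homography(frame_corners, relief_corners)
center = warp_point(H, 0.5, 0.5)  # where the middle of the frame lands on the relief
```

In practice the corners would be nudged by eye until the projected edge sits on the milled edge. Solving a homography from four points is the standard math behind a corner pin, and it assumes the relief's outline is close to planar from the projector's point of view.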

Process

The piece is the back half of a long R&D thread. The front half was spent getting the source motion right, then figuring out how to hold motion in a static object.

Source: MediaPipe → Google Veo. The first version captured a real runner with MediaPipe and reconstructed a profile silhouette per frame. The tracking worked, but the output was a stack of pose keypoints: useful for analysis, the wrong primitive for sculpture. Switching to Google Veo gave generated footage that was already the figure in profile, with full depth, controllable phrasing, and consistent lighting from clip to clip. The piece is about rendering motion as surface; starting from generated motion let the surface stay the focus.

Mold studies → direct CNC. The first fabrication path was to 3D-print the relief, vapor-smooth the ABS print, pour a silicone mold, and cast in resin. Surface quality was there; print volume was not. Scaling to gallery panels would have meant tiling the mold and chasing seams, so the mold path was abandoned for a direct CNC pipeline: depth map → Fusion 360 toolpath → ShopBot on sheet stock. One panel, one pass.
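Fusion 360 generates the real toolpaths, with tool geometry, feeds, and stepover chosen in CAM. As a rough illustration of what the depth map → toolpath step means, here is a simplified parallel (zig-zag raster) finishing pass over a heightfield. The depth convention (1.0 = nearest the viewer, left at the stock top), the relief depth, the pixel size, and the stepover are all assumptions, and the sketch ignores tool-radius compensation, which any real CAM pass must handle:

```python
from typing import List, Tuple

Heightfield = List[List[float]]


def depth_to_z(depth: Heightfield, relief_depth: float) -> Heightfield:
    """Normalized depth (1.0 = nearest the viewer) -> cut Z: 0 at the stock top, negative into the sheet."""
    return [[(d - 1.0) * relief_depth for d in row] for row in depth]


def raster_toolpath(z: Heightfield, pixel_size: float, stepover_px: int) -> List[Tuple[float, float, float]]:
    """Zig-zag finishing pass: cut along X, step over in Y, alternating direction each row.

    Ignores tool-radius compensation; the tool tip simply follows the heightfield.
    """
    if stepover_px < 1:
        raise ValueError("stepover must be at least one pixel")
    path = []
    for pass_index, row_index in enumerate(range(0, len(z), stepover_px)):
        row = z[row_index]
        cols = range(len(row)) if pass_index % 2 == 0 else range(len(row) - 1, -1, -1)
        for c in cols:
            path.append((c * pixel_size, row_index * pixel_size, row[c]))
    return path


# A 3x3 depth map, a hypothetical 0.75-unit relief, 0.1-unit pixels, every other row cut.
depth = [
    [0.0, 0.5, 1.0],
    [0.2, 0.6, 0.9],
    [0.4, 0.8, 1.0],
]
z = depth_to_z(depth, relief_depth=0.75)
path = raster_toolpath(z, pixel_size=0.1, stepover_px=2)
```

Each sampled row of the depth map becomes one pass of the tool, and alternating direction avoids a return move between rows. A larger stepover cuts faster but leaves visible scallops between passes.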

Runtime: TouchDesigner with a MIDI surface. The relief is static — the projection isn't. A TouchDesigner operator network stacks layers (dithered halftones, pixelation thresholds, contour lines, depth-derived isophotes). A 9-channel hardware MIDI controller crossfades the stack live; the same controller sits on the gallery floor as a public input so visitors can author the projection while looking at it. The source video plays in profile beside the relief — the body in the panel and the body on screen meet in the same beat.
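The paragraph above names the layers but not how they are built or how the nine channels drive them. A minimal per-pixel sketch in pure Python, assuming grayscale images as nested lists in [0, 1], each layer's opacity driven by one 7-bit MIDI Control Change value (0 to 127), and a normalized weighted sum as the blend. An ordered (Bayer) dither stands in for the halftone pass, the pixelation layer is a plain block downsample, and `iso_lines` draws contour lines when given the depth map (fed a shading image computed from depth, the same function would give isophotes). All names, channel assignments, and knob values are hypothetical; in TouchDesigner this work would live in operators, not Python loops.

```python
from typing import Dict, List

Image = List[List[float]]  # grayscale rows, values in [0, 1]

# 4x4 Bayer index matrix, tiled across the image as per-pixel thresholds.
BAYER_4 = [
    [0, 8, 2, 10],
    [12, 4, 14, 6],
    [3, 11, 1, 9],
    [15, 7, 13, 5],
]


def ordered_dither(img: Image) -> Image:
    """1-bit ordered dither: a pixel turns on if it exceeds its Bayer threshold."""
    return [
        [1.0 if v > (BAYER_4[y % 4][x % 4] + 0.5) / 16 else 0.0 for x, v in enumerate(row)]
        for y, row in enumerate(img)
    ]


def pixelate(img: Image, block: int) -> Image:
    """Block downsample: every pixel takes the value of its block's top-left sample."""
    return [[img[y - y % block][x - x % block] for x in range(len(row))] for y, row in enumerate(img)]


def iso_lines(field: Image, bands: int) -> Image:
    """Mark pixels where the quantized band of a scalar field changes to the right or below."""
    q = [[min(int(v * bands), bands - 1) for v in row] for row in field]
    h, w = len(q), len(q[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            right = x + 1 < w and q[y][x + 1] != q[y][x]
            below = y + 1 < h and q[y + 1][x] != q[y][x]
            if right or below:
                out[y][x] = 1.0
    return out


def cc_to_weight(cc: int) -> float:
    """Clamp a 7-bit MIDI CC value to 0..127 and scale it to an opacity in [0, 1]."""
    return max(0, min(127, cc)) / 127.0


def mix(layers: Dict[str, Image], cc: Dict[str, int]) -> Image:
    """Normalized weighted sum of same-sized layers; a layer with no CC value gets weight 0."""
    weights = {name: cc_to_weight(cc.get(name, 0)) for name in layers}
    total = sum(weights.values()) or 1.0  # all knobs down -> black frame, not a division by zero
    first = next(iter(layers.values()))
    h, w = len(first), len(first[0])
    return [
        [sum(weights[n] * layers[n][y][x] for n in layers) / total for x in range(w)]
        for y in range(h)
    ]


# Tiny synthetic inputs: a luminance ramp and a left-to-right depth ramp.
luma = [[(x + y) / 6 for x in range(4)] for y in range(4)]
depth = [[x / 3 for x in range(4)] for _ in range(4)]
layers = {
    "source": luma,
    "dither": ordered_dither(luma),
    "pixel": pixelate(luma, 2),
    "contours": iso_lines(depth, 3),
}
# Hypothetical knob positions for four of the nine channels.
frame = mix(layers, {"source": 127, "dither": 64, "pixel": 0, "contours": 127})
```

Normalizing by the total weight keeps the projection from blowing out when several knobs are up at once. An additive or screen blend would be an equally plausible choice and would make each knob feel more independent.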

The full process narrative — early experiments, abandoned mold path, controller build, grade pass — lives at bas.run.